Last Friday the OpenCitations blog published a guest post by Alberto Martín-Martín that compares the coverage of COCI and other open citation data with that of subscription citation indexes. This is an important blog post, as it changes how we think about citations and open metrics.

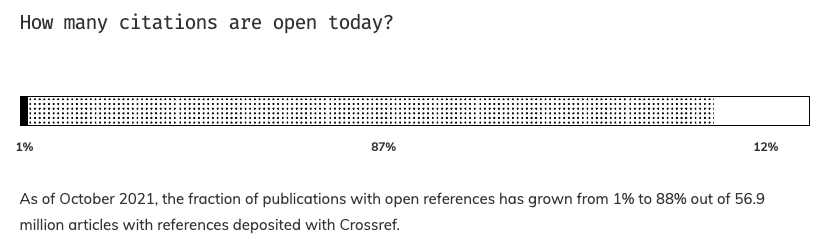

COCI is the OpenCitations Index of Crossref open DOI-to-DOI citations, based on the open references made available by Crossref in the context of the Initiative for Open Citations (I4OC). As of October 2021, 88% of the 56.9 million articles with references deposited with Crossref have open references.

I4OC, together with Crossref members, Crossref, and OpenCitations, has come a long way since it started in 2017, and I am proud that I played a small role in getting I4OC started. Please keep in mind that not all Crossref members submit references for their DOIs. Crossref has issued 128 million DOIs for scholarly content to date, including 91 million DOIs for journal articles. Also, Crossref includes these open references in its REST API, but currently does not make the processed references (aggregated by cited DOI) openly available. The Crossref REST API provides the citation count as is-referenced-by-count; to see the actual citations you need to either be a Crossref member participating in the Crossref Cited-by service, or use COCI and other open citation indexes.
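To make the difference between the two sources concrete, here is a minimal Python sketch. It assumes the Crossref REST API returns a work record under `message` with the count in the `is-referenced-by-count` field, and that the COCI v1 API returns a JSON list of citation records with `citing` and `cited` DOIs. The helper names (`crossref_url`, `coci_url`, `citation_count`, `citing_dois`, `fetch_json`) are my own, and the COCI endpoint and response shape may have changed since this was written, so treat this as an illustration rather than a reference client.

```python
import json
from urllib.parse import quote
from urllib.request import urlopen

# Base URLs; the COCI v1 path is an assumption and may since have been superseded.
CROSSREF_WORKS = "https://api.crossref.org/works/"
COCI_CITATIONS = "https://opencitations.net/index/coci/api/v1/citations/"


def crossref_url(doi: str) -> str:
    """URL of the Crossref work record, which carries only the citation count."""
    return CROSSREF_WORKS + quote(doi)


def coci_url(doi: str) -> str:
    """URL of the COCI citation list, which carries the individual citing DOIs."""
    return COCI_CITATIONS + quote(doi)


def citation_count(crossref_response: dict) -> int:
    """Read the citation count from a parsed Crossref work response."""
    return int(crossref_response["message"].get("is-referenced-by-count", 0))


def citing_dois(coci_response: list) -> list:
    """Return the distinct citing DOIs from a parsed COCI response, sorted."""
    return sorted({row["citing"] for row in coci_response})


def fetch_json(url: str):
    """Download and parse a JSON document (needs network access)."""
    with urlopen(url, timeout=30) as response:
        return json.load(response)
```

With network access, `citation_count(fetch_json(crossref_url(doi)))` gives the number Crossref reports, while `citing_dois(fetch_json(coci_url(doi)))` lists the citing works that are openly available. The two numbers can differ, because COCI only contains citations from works with open references.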

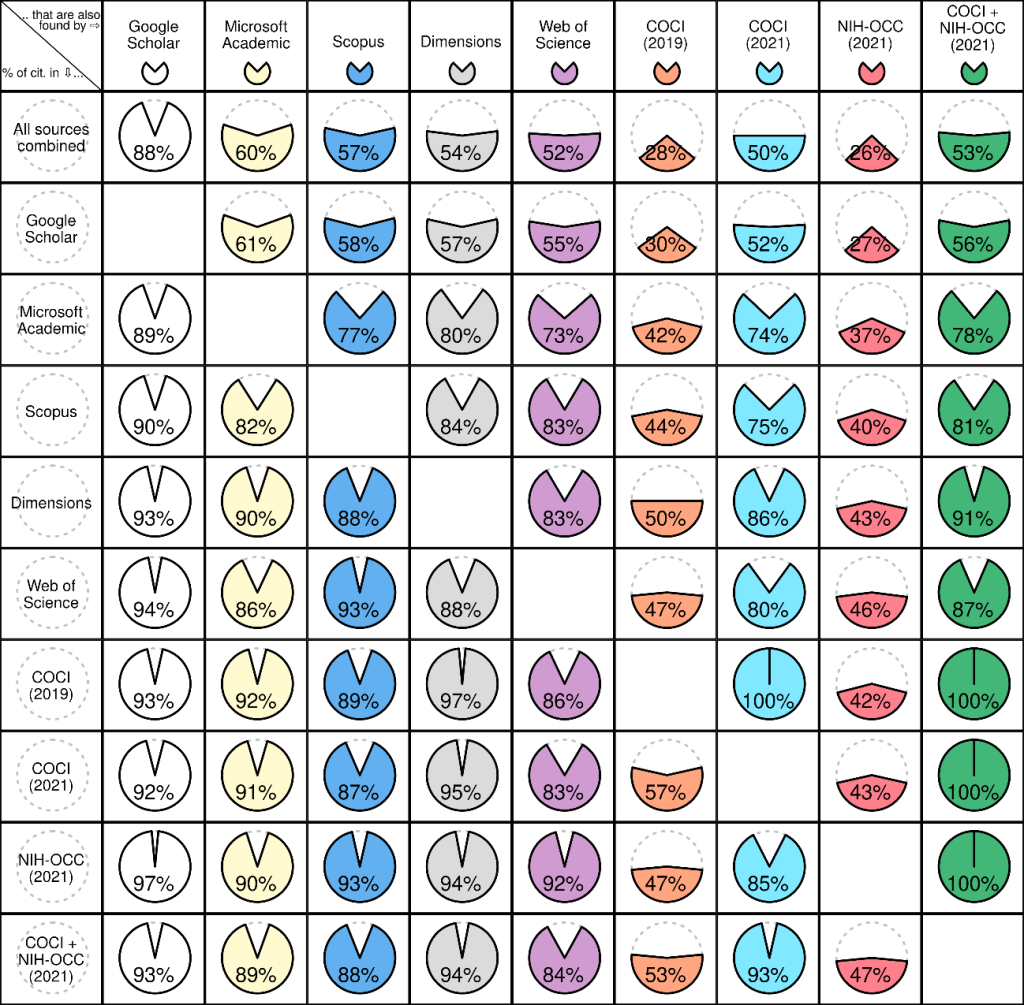

With the number of open references closing in on 90%, this is now a good time to take a closer look at the coverage of these open citation data compared to other available citation indexes and compare the results to the situation in 2019. And this is exactly what Alberto Martín-Martín and colleagues have done. Their 2019 analysis was published in 2020 (Martín-Martín et al. 2020) and looked at 2,515 highly-cited English-language documents published in 2006 from 252 subject categories, and the citations to these publications found in six services: Google Scholar, Microsoft Academic, Scopus, Dimensions, Web of Science (WoS), and COCI.

In the 2020 publication, Google Scholar found the most citations with 88%, and COCI found the fewest with 28% (Microsoft Academic found 60%, Scopus 57%, Dimensions 54%, and WoS 52%). The new analysis, done for COCI with August 2021 Crossref data for the same 2,515 papers published in 2006 and for citing papers published until 2019, shows a substantial difference: COCI now covers 50% of citations, or 53% when combined with the NIH Open Citation Collection, another index of open citations.

These findings change everything, as coverage by open citation indexes is now comparable to the commercial services Web of Science, Scopus and Dimensions. Microsoft announced in May that Microsoft Academic will retire at the end of 2021. And Google Scholar plays a special role, as it has the best coverage, but no API access (free or paid), making it basically impossible to build services on top of Google Scholar. This is a rather unusual decision by Google (compare e.g. Google Maps) that can only be explained by existing license agreements with publishers.

The analysis was done using 2,515 papers published in 2006, and there are small differences between service providers depending on the subject area. As this was an analysis based on Crossref DOIs, the analysis misses citing publications not registered with Crossref, or not making their references available to Crossref. And the analysis focused on coverage, not looking at other aspects of the services provided. More work needs to happen in the coming years on these topics.

But the main conclusion is very clear: open citation indexes are comparable in terms of coverage to subscription citation services, and the decision to use a subscription service should be based on convenience rather than coverage. I can't emphasize enough the importance of this critical milestone, and I congratulate Alberto Martín-Martín and his team on doing this work.

I would not be surprised to see comments and publications in the coming weeks and months arguing with these findings. This reminds me of a discussion we had about 15 years ago on the reliability of Wikipedia compared to the Encyclopedia Britannica, started by a study published in Nature (Giles 2005, Internet encyclopaedias go head to head). I fully expect the final outcome here to be similar.

References

- Shotton D. Coverage of open citation data approaches parity with Web of Science and Scopus. OpenCitations blog. Published October 27, 2021. Accessed July 2, 2023. https://opencitations.wordpress.com/2021/10/27/coverage-of-open-citation-data-approaches-parity-with-web-of-science-and-scopus/

- Martín-Martín A, Thelwall M, Orduna-Malea E, Delgado López-Cózar E. Google Scholar, Microsoft Academic, Scopus, Dimensions, Web of Science, and OpenCitations’ COCI: a multidisciplinary comparison of coverage via citations. Scientometrics. 2021;126(1):871-906. doi:10.1007/s11192-020-03690-4

- Giles J. Internet encyclopaedias go head to head. Nature. 2005;438(7070):900-901. doi:10.1038/438900a