Open access to research data is becoming increasingly important, as manifested by memos or press releases from the Wellcome Trust, the European Commission, and the Office of Science and Technology Policy (OSTP) at the White House.

Open access to research data is important because it makes it easier for other researchers to reproduce the research and to build upon it, either by re-analyzing the data or by combining it with other research data. In other words, Science as an open enterprise.

The major challenge to open access to research data is that data sharing is not a widespread practice. Several strategies have been developed to create incentives for researchers to share research data, including services that make it easier to share research data (e.g. figshare, DataUp and Zenodo), metrics for research data, and data journals such as Earth System Science Data, GigaScience or the Journal of Open Archaeology Data. Sticks that have been tried in addition to these carrots include data management plan requirements such as those set forth by the National Science Foundation (NSF) in 2011.

I would argue that all these carrots and sticks will eventually fall short unless we redefine what the journal article (and similarly the monograph) in the digital age should be about. Research data should become a required part of any research article, rather than being an optional afterthought or taking on a life of their own in a separate data journal.

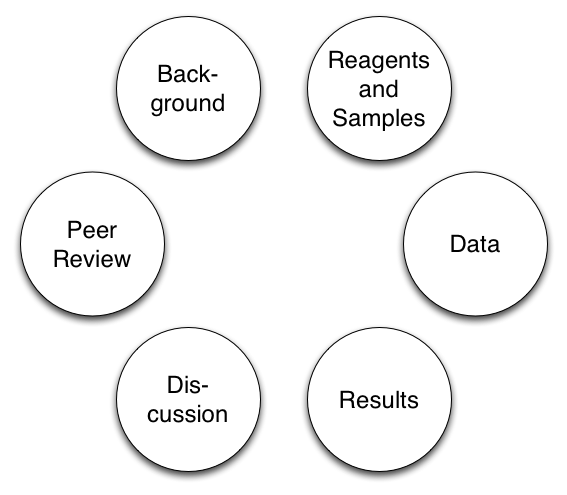

The complete article - as I would like to call this journal article made fit for the digital age - should not only include the research data used to create the figures and tables and reported as results. Equally important are descriptions of reagents, workflows and software tools that go into much more detail than is common practice today.

The complete article does not have to come as one big file. More likely the research data will be hosted at one or more data centers elsewhere. Authorship will turn into contributorship and will include all roles required to put the complete article together, including for example data collection and analysis, and writing the software needed to analyze the data. The complete article can be shorter or longer than the typical article today; what matters is not article length, but the combination of text, data, and description of reagents and analysis tools.

The complete article should also include (or link to) the text of the peer reviews and previous article versions, including preprints. This makes it much easier to understand the article (and the data) in context. The complete article should also link to article-level metrics post-publication for similar reasons.

This idea of a complete article is not too far away from the best practices used today, but it is important to make it the default for scientific publication. Too much of what we publish today is still centered on what can be printed on paper, and telling exciting stories that have impact still counts for more than telling complete stories that can be reproduced.